Abstract:

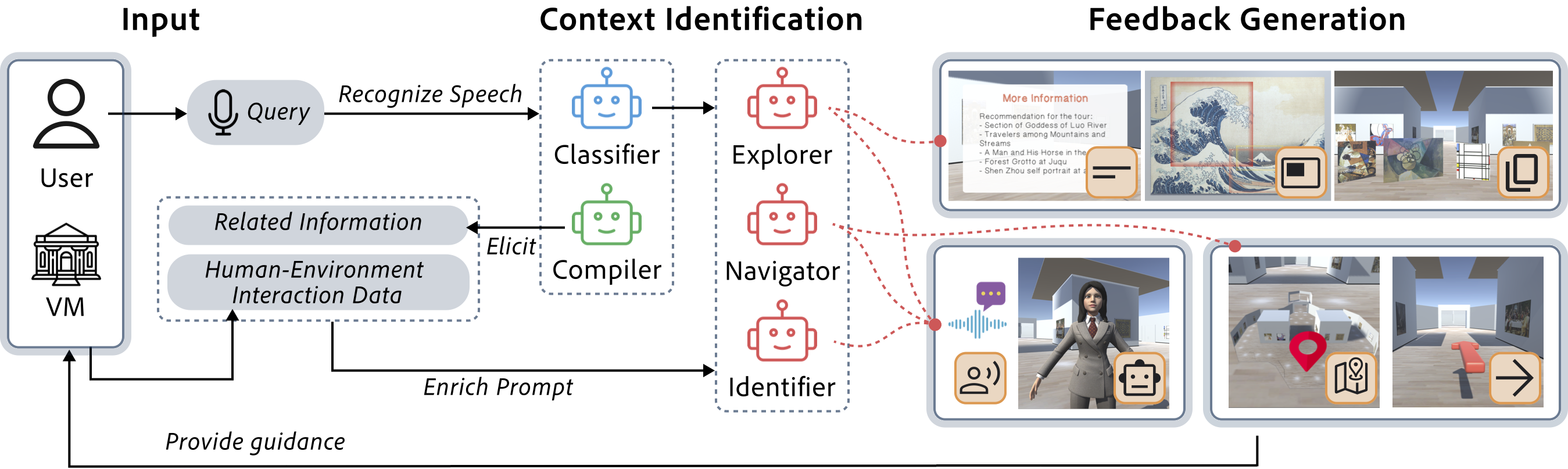

Tour guidance in virtual museums encourages multi-modal interactions to boost user experiences, concerning engagement, immersion, and spatial awareness. Nevertheless, achieving the goal is challenging due to the complexity of comprehending diverse user needs and accommodating personalized user preferences. Informed by a formative study that characterizes guidance-seeking contexts, we establish a multi-modal interaction design framework for virtual tour guidance.We then design VirtuWander, a two-stage innovative system using domain-oriented large language models to transform user inquiries into diverse guidance-seeking contexts and facilitate multi-modal interactions. The feasibility and versatility of VirtuWander are demonstrated with virtual guiding examples that encompass various touring scenarios and cater to personalized preferences. We further evaluate VirtuWander through a user study within an immersive simulated museum. The results suggest that our system enhances engaging virtual tour experiences through personalized communication and knowledgeable assistance, indicating its potential for expanding into real-world scenarios.

[Paper]

Video